How Lewisham and Greenwich NHS Trust Built a Structured Approach to Clinical AI

Using deepcOS®, the Trust compared fracture detection solutions in a structured way and created a stronger foundation for future AI adoption


Clinical AI adoption is not just about choosing the right solution. For most Trusts, the greater challenge is building confidence among clinical, operational, governance, and executive teams in how AI will be evaluated, adopted, and used.

At Lewisham and Greenwich NHS Trust, the challenge started with a clear problem. The Trust was facing high demand, limited reporting capacity, and delays that could affect patient pathways. In clinical workflows, those delays had real consequences, from later fracture confirmation and added pressure in emergency care to patient recalls and avoidable strain across the wider organisation.

This is what makes the Trust’s experience useful beyond a single deployment: it offers a practical model other NHS organisations can learn from as they navigate their own approach to clinical AI.

For Dr Sarojini David, AI Lead, Clinical Director for Radiology, and Divisional Medical Information Officer at Lewisham and Greenwich NHS Trust, the need was obvious. “As you know, the entire NHS has a shortage of [workforce] and, specifically in radiology, there is a significant workload,” she said. “We are unable to meet our current demand.”

For Steven Thorndyke, Director of IT at Lewisham and Greenwich NHS Trust, the challenge also had an organisational dimension. Any AI project would need to support a real use case, fit clinical workflows, and stand up to internal scrutiny as a serious investment.

Lewisham and Greenwich NHS Trust did not simply adopt a fracture detection solution. They built a more structured way to evaluate clinical AI, align stakeholders, and move from interest to implementation with greater confidence.

Starting with a real need, not with AI for its own sake

The Trust began with a defined problem: reporting delays were affecting clinical flow and patient experience. Emergency teams were waiting longer, and the knock-on effects reached diagnosis, treatment, complaints, and cost.

“We had to go in for innovation,” said Dr David. “We had to pick up the AI technology to help with our workload and improve our diagnostic processes.”

That clarity shaped the whole project. Fracture detection was not chosen because it was fashionable. It was chosen because it addressed a specific operational and clinical need that the Trust already understood.

Thorndyke made the same point from the IT side. “Start with a clear problem statement,” he said. “Is there a real, valid use case?”

Because the use case was clearly defined, the Trust could focus on whether a solution would work in its own environment rather than respond to generic claims.

Why the Trust chose comparison over a single vendor route

Once the use case was defined, the next question was how to choose the right solution.

There were multiple tools on the market, and the Trust wanted a more rigorous and consistent way to compare them. They did not want to rely on one vendor conversation or one set of product claims to make a high-stakes decision.

“We wanted to get the best of the solutions available,” said Dr David. “Each solution can vary significantly between its performance metrics, sensitivity, and specificity, but we also wanted to identify which solution was most compatible with our workflow.”

That distinction matters. The Trust was not only asking which solution performed best. They were also asking which tool would fit their workflow, which one stakeholders could use safely, which one would create less friction in implementation, and which one the organisation could govern confidently over time.

Thorndyke was equally direct about the need for comparison. “Comparing multiple AI solutions was really essential,” he said. “You’ve got to have a standard way to do it.”

The Trust was not looking for a sales pitch. They were looking for a decision-making process that could be defended internally.

How deepcOS® helped turn comparison into a workable process

Rather than handling separate vendor processes one by one, Lewisham and Greenwich NHS Trust used deepcOS® AI Evaluator to compare fracture detection solutions through a more unified process. That gave the team a clearer way to assess options against the same criteria while reducing the burden of fragmented governance, integration, and monitoring work.

For the Trust, this was about more than convenience. deepcOS® enabled a structured, repeatable evaluation process, made comparison more consistent, and helped create a more manageable path from assessment to deployment.

Dr David was clear about why that mattered. A platform-based approach made it easier to compare solutions without introducing unnecessary bias and avoided the added complexity of assessing different vendors separately across governance, regulatory compliance, integration, data privacy, and workflow fit.

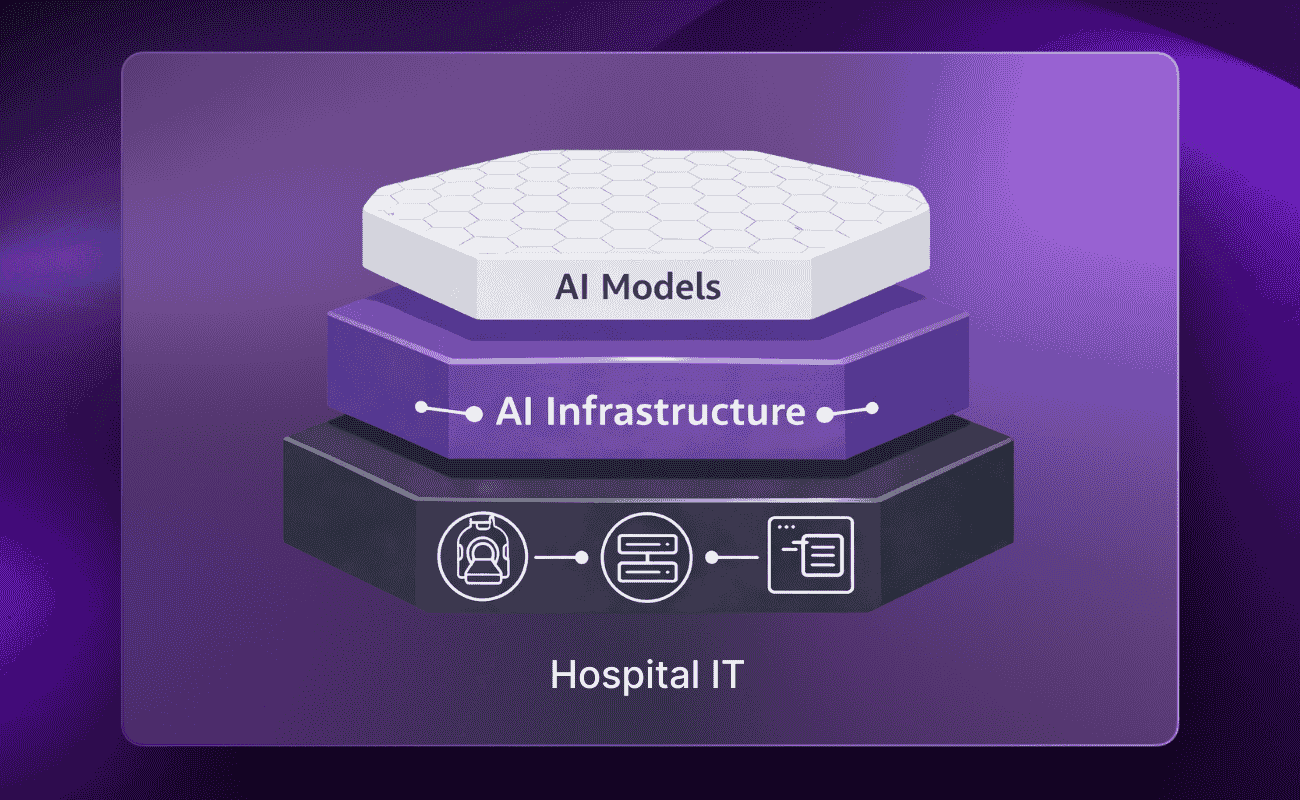

The value of deepcOS® in this case was not only access to multiple solutions. It was the shared infrastructure for evaluating them against the same criteria.

Why Trust-wide confidence mattered as much as model performance

This project was not only about assessing technology. It was about building confidence across the organisation.

That confidence had to exist at several levels: clinical confidence that the solution could support decision-making safely, operational confidence that it could fit the workflow, governance confidence that the risks were understood, and executive confidence that the project had enough structure to justify adoption.

The Trust involved radiology, emergency care, IT, PACS, governance, and clinical safety stakeholders. They created feedback loops, ran surveys, built a standard operating procedure, held weekly review meetings, and kept human oversight central.

“Safety of the AI solution was one of the priorities,” said Dr David.

That priority shaped the process from the beginning. The project was built around formal oversight and structured review, including British standards, the Duke University oversight model, NICE guidance, AI board review, clinical safety involvement, hazard logs, and post-deployment monitoring.

Thorndyke made the same principle clear from the IT side: “This isn’t just a technical project; it should have clinical oversight.”

What made the project work was not simply that the Trust found a useful solution. They created a process that other stakeholders could trust.

Turning a first AI deployment into a change process

Because this was one of the Trust’s early AI deployments, the challenge was not only technical.

Teams needed to understand how the solution would be used. They needed clarity on roles and responsibilities. They needed reassurance that AI was there to support decision-making, not replace clinical judgment. They also needed a way to raise concerns, review discrepancies, and see how learning from the pilot would feed back into improvement.

The Trust addressed that directly. Training sessions were built into the process. Sentiment surveys were used to capture how teams felt about the deployment. The standard operating procedure made responsibilities clearer. Weekly meetings gave stakeholders a place to discuss issues openly. Feedback loops helped move the project from cautious interest to practical use.

Thorndyke acknowledged that this kind of adoption takes work. “Some teams were nervous about it,” he said. “You’ve just got to bring everyone on the journey.” He also stressed that “the human makes the decision.”

Dr David described this as a steep learning curve for the entire team. That is part of what makes the project useful for other Trusts: it shows what careful adoption can look like inside a real organisation.

What the evaluation looked at in practice

The Trust ultimately narrowed its comparison to two fracture detection solutions.

The final assessment reflected how each solution would actually be used, not just how it performed in isolation. Emergency department teams and radiology teams both contributed, helping ensure that the decision was grounded in real clinical use.

In the end, one solution performed better overall and was selected for pilot deployment. The decision was informed by a combination of sensitivity and specificity, interface quality, workflow compatibility, and the likely effect of overcalling or undercalling on patient care.

A solution can perform well in theory and still fail in a hospital environment if it creates workflow friction or introduces a different kind of risk. Lewisham and Greenwich NHS Trust built its comparison process to surface those issues early.

Making the business case more defensible

This project also carried a business case dimension that should not be overlooked.

Thorndyke was explicit about that in the interview. “Implementing AI solutions is so new, you haven’t got all the previous evidence from other Trusts,” he said. “It’s essential to have a pilot to prove value, especially to our finance colleagues.”

That is one reason the comparative approach mattered so much.

By creating evidence through a defined pilot, consistent comparison, and cross-functional input, the Trust made the decision easier to support beyond radiology alone. The work was not just clinically useful. It also gave the organisation a stronger way to explain why this project should move forward and how future projects could be assessed.

Many AI projects stall not because the clinical problem is unclear, but because the organisation lacks enough evidence, alignment, or governance confidence to support the next step. Lewisham and Greenwich NHS Trust used this project to build that internal case more effectively.

What changed for clinicians and patients

The selected fracture detection solution was introduced as a support for decision-making, not as a replacement for radiologist judgment.

Dr David described the impact as meaningful for both clinicians and patients. The project helped improve confidence, supported faster decisions, and reduced friction in the journey from imaging to care.

For emergency department teams, the value came from getting a faster second read when formal reporting was delayed. That gave clinicians more support at the point of care and helped reduce delays between imaging and action.

For patients, the effect was more immediate and practical. Faster support could help reduce avoidable waiting, reduce unnecessary returns, and improve the pathway from image to treatment decision.

The project also changed the way teams worked together. Because radiology, ED, IT, PACS, governance, and safety teams were all involved, the Trust was not just deploying a solution. They were building a stronger internal model for how future AI projects could be introduced and managed.

Both speakers describe this work as a starting point rather than a finish line.

That is what makes the story especially relevant for other trusts.

The biggest output of the project was not only one deployed use case. It was the creation of organisational capability. Lewisham and Greenwich NHS Trust now has a clearer governance pathway, a baseline operating model, a working SOP, and a better understanding of how to compare solutions, involve stakeholders, and manage monitoring after go-live.

That progression matters. The Trust moved from identifying a single operational problem, to running a structured comparison, to building governance and stakeholder alignment, to creating a model that it can now apply to future AI projects.

That means the second and third projects do not have to start from zero.

A first deployment, if handled well, does more than solve one problem. It creates policy, governance, and operational capability for what comes next.

Thorndyke described that shift clearly: “Having an established governance framework now enables us as a Trust to kind of evaluate future AI solutions.”

In the interview, Dr David and Thorndyke point to future areas, including chest X-rays, CT brain, and mammography, as well as wider collaboration across South East London. That future orientation matters. It shows that the Trust does not see this as a one-off implementation. It sees it as the foundation for a broader and more confident AI strategy.

What other NHS trusts can learn from this approach

Lewisham and Greenwich NHS Trust’s advice to peers is practical: start with a real need, define the use case clearly, involve the right stakeholders early, and keep the work clinically led. Do not separate technical evaluation from governance, safety, and workflow assessment. Build a process that teams across the organisation can understand and support.

Thorndyke’s summary is as direct as it is useful: “Start with a clear problem statement,” and “Make sure it’s clinically led; it should be driven by the clinicians, not from an IT aspect.”

Just as important, plan for what happens after go-live. AI adoption is not complete once a solution is switched on. It has to be reviewed, governed, and improved in use.

This is not just a story about fracture detection. It is a practical example of how one trust built the confidence, structure, and internal alignment needed to evaluate and adopt clinical AI more effectively.

Using deepcOS®, Lewisham and Greenwich NHS Trust created a more manageable path from evaluation to implementation and a stronger base for future projects. More importantly, this first project created a reusable path that the Trust can build on as it evaluates and adopts future AI solutions.

Attend the upcoming webinar to hear how Lewisham and Greenwich NHS Trust approached comparative evaluation, governance, and rollout planning for clinical AI in practice.
